The Large Model Systems Organization develops large models and systems that are open, accessible, and scalable.

Latest Blog

See all posts

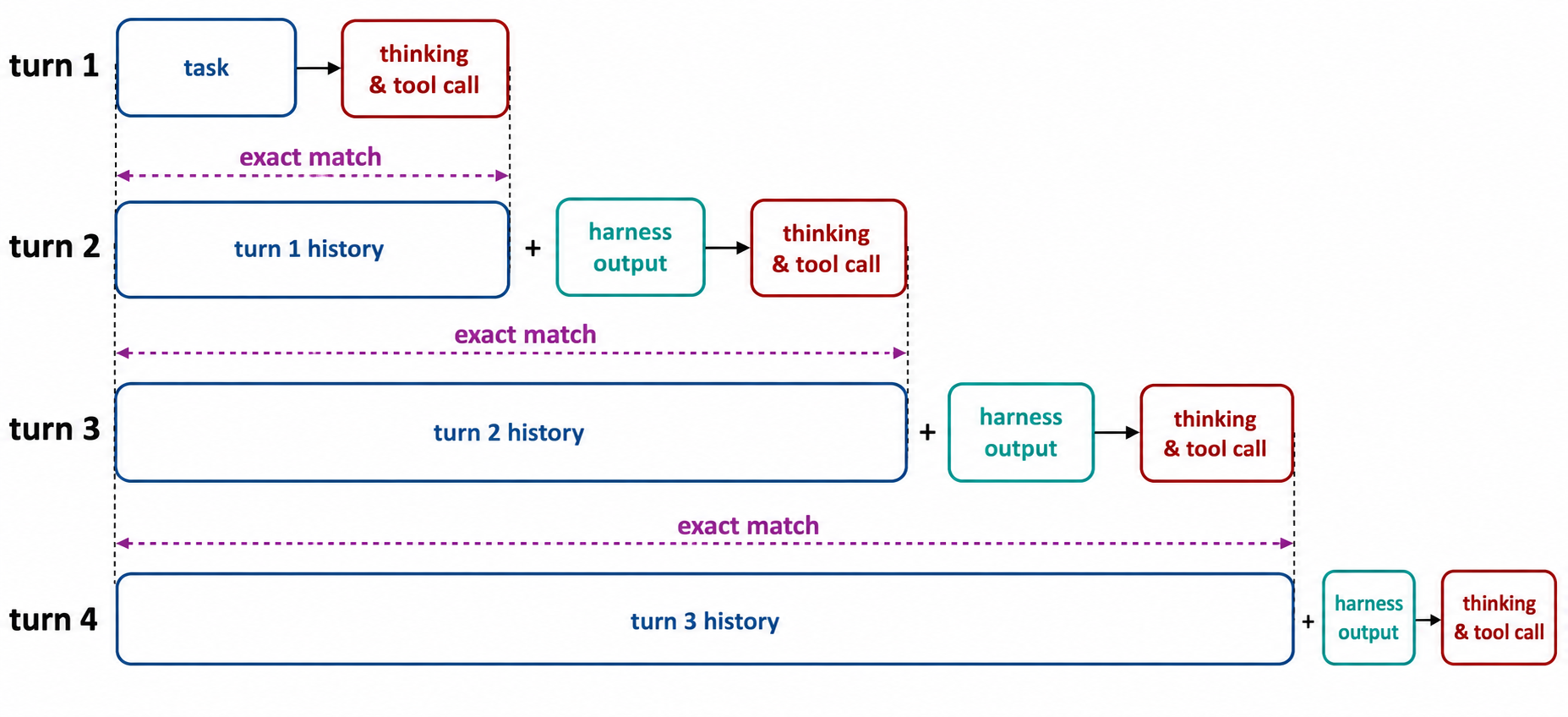

No Token Left Behind: Demystifying Token-In-Token-Out in Miles

In agentic RL, a rollout is not a single generation. It is a chain of model calls, tool outputs, harness messages, and resumed generations. Token-In-Token-Out (TITO) is a design principle that address...

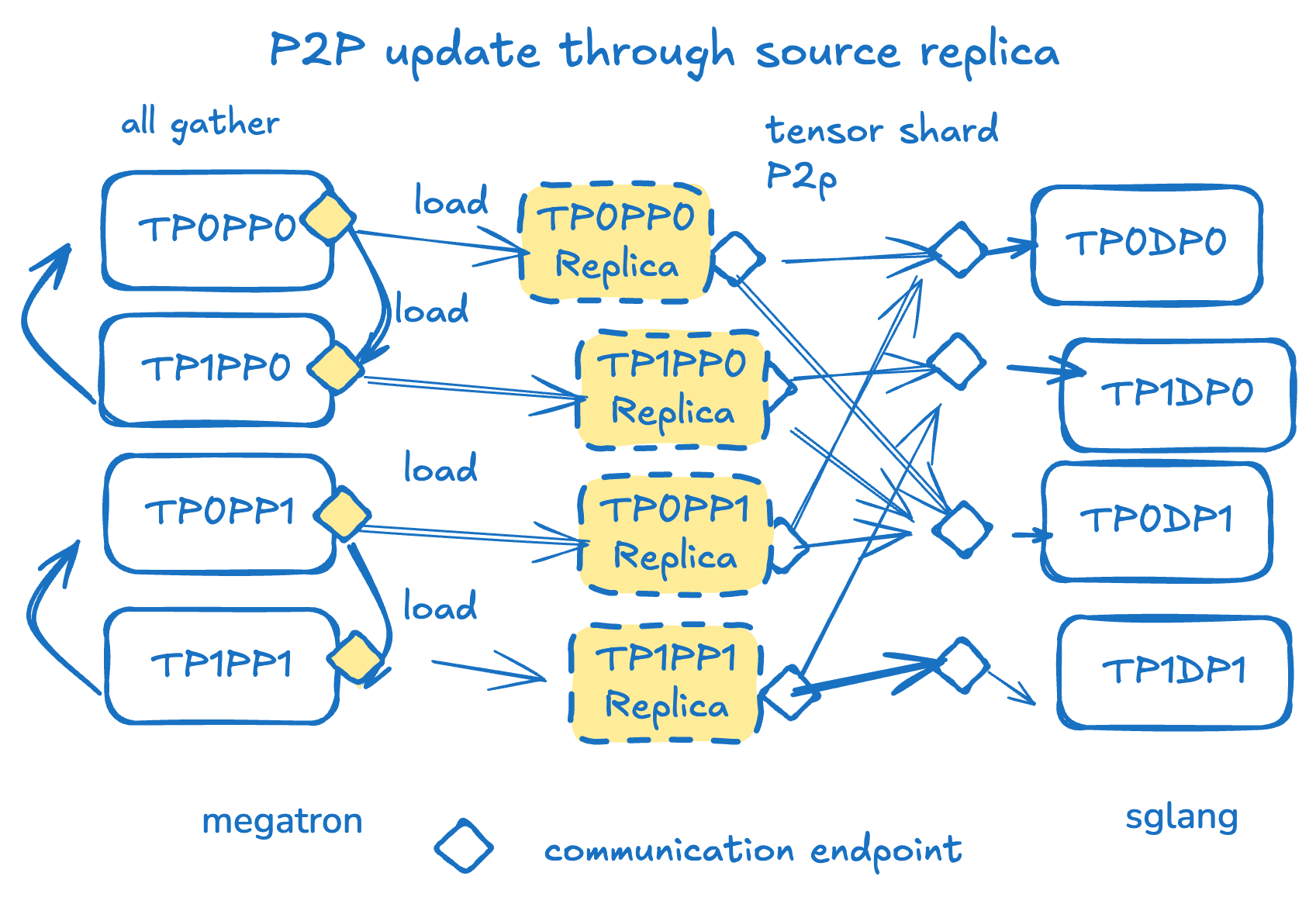

Updating 1T parameters in seconds — P2P weight transfer in Large Scale Distributed RL

We introduced an RDMA-based, peer-to-peer weight update mechanism for RL workloads in SGLang as a supplement to traditional NCCL broadcast methods, compatible with all major open-source models. By util...

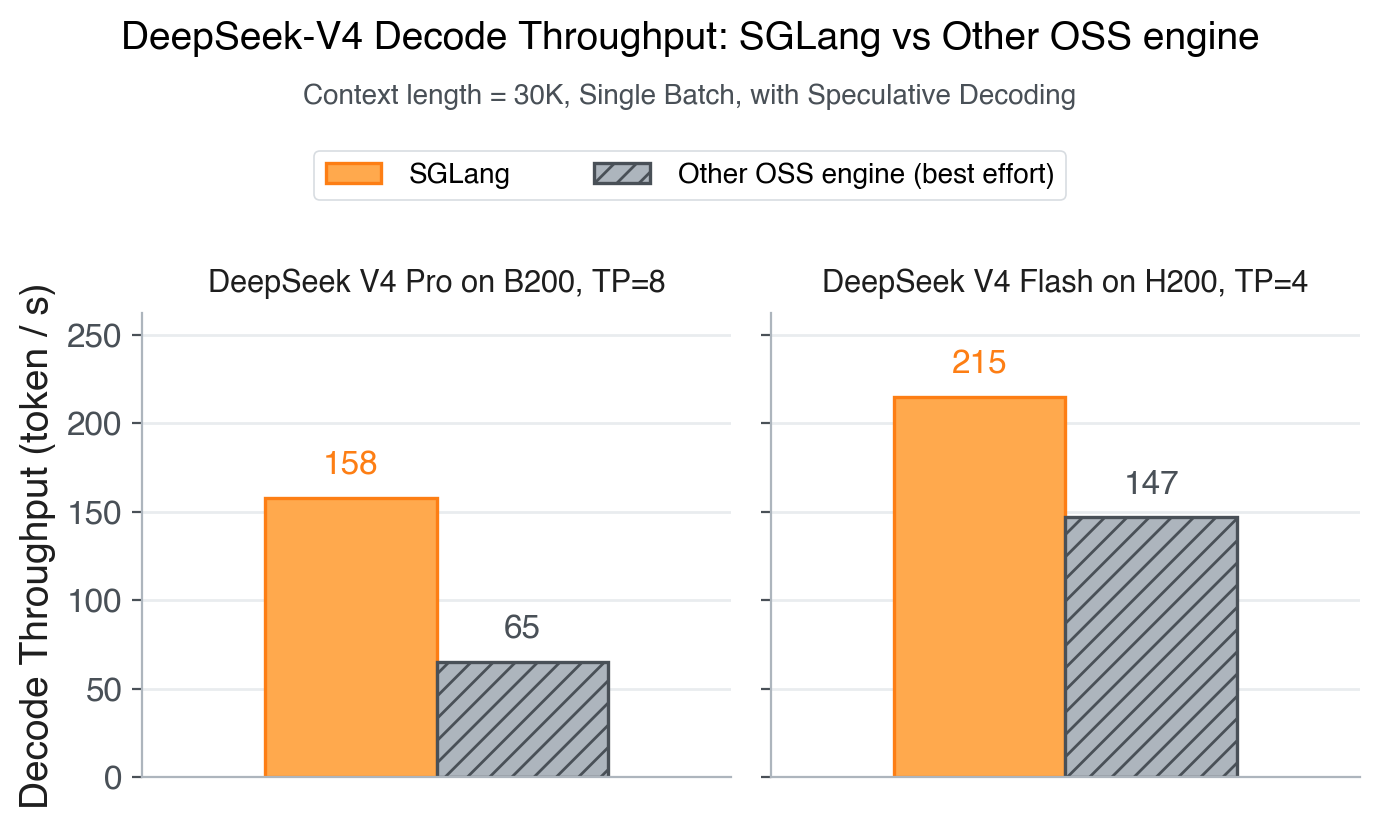

DeepSeek-V4 on Day 0: From Fast Inference to Verified RL with SGLang and Miles

We are thrilled to announce Day-0 support for DeepSeek-V4 across both inference and RL training. SGLang and Miles form the first open-source stack to serve and train DeepSeek-V4 on launch day — with s...

Projects

View all projects

Our Sponsors & Partners

Backed by leading companies and institutions advancing AI research.

Voltage Park, NVIDIA, Nebius, Google Cloud, AtlasCloud, a16z, AMD, InnoMatrix, Laude Institute, Hyperbolic, NovitaAI, Verda Cloud, Sky9, Kaggle, MBZUAI, Together, RunPod, Anyscale, HuggingFace